Taking care of your test automation health

This blog post is heavily inspired by Mark Fewster’s talk at the 2016 Test Automation Day. I believe that test automation health is an important yet often overlooked subject, so I decided to dedicate a blog post to it. Many of the concepts have been borrowed from his talk, so Mark, in the unlikely case you’re reading this, thanks! The images included in this blog post are also pictures I took of some of his slides at TAD.

So, some time ago, you decided to create (or were given the task of creating) a test automation solution to support your testing activities. You started designing, building and testing your solution (you did all that, right?), you implemented some tests and then demonstrated the solution to your team and to management. Cue thunderous applause. That was some time ago, and looking back you realize that it pretty much all went downhill from there. Even though you added a lot more tests, increasing your test coverage and approaching the magic / ridiculous (strike out what does not apply) 100%, trust in your solution has dwindled. Tests are getting flaky, maintenance has become an ever-increasing burden and you even overhear people discussing ignoring the automated test results altogether ‘because frankly, I don’t trust them’. What went wrong? And what could you have done to prevent this from happening?

Chances are that you got carried away adding more and more tests to your solution, ignoring a vital part of test automation development: the health of the solution itself. It’s a bit like becoming a decent distance runner: you can’t do that simply by running a little further every day. If you use that approach, you’ll soon burn out and feel permanently tired. It’s better to take a rest day every now and then, get a sports massage and buy a new pair of shoes when the old ones are no longer performing well.

The same goes for building upon your initial test automation solution: if you don’t take regular stock of how your solution is performing and take the time to make the necessary updates and alterations to keep it running smoothly, the risk of it getting tired and performing suboptimally increases by the day.

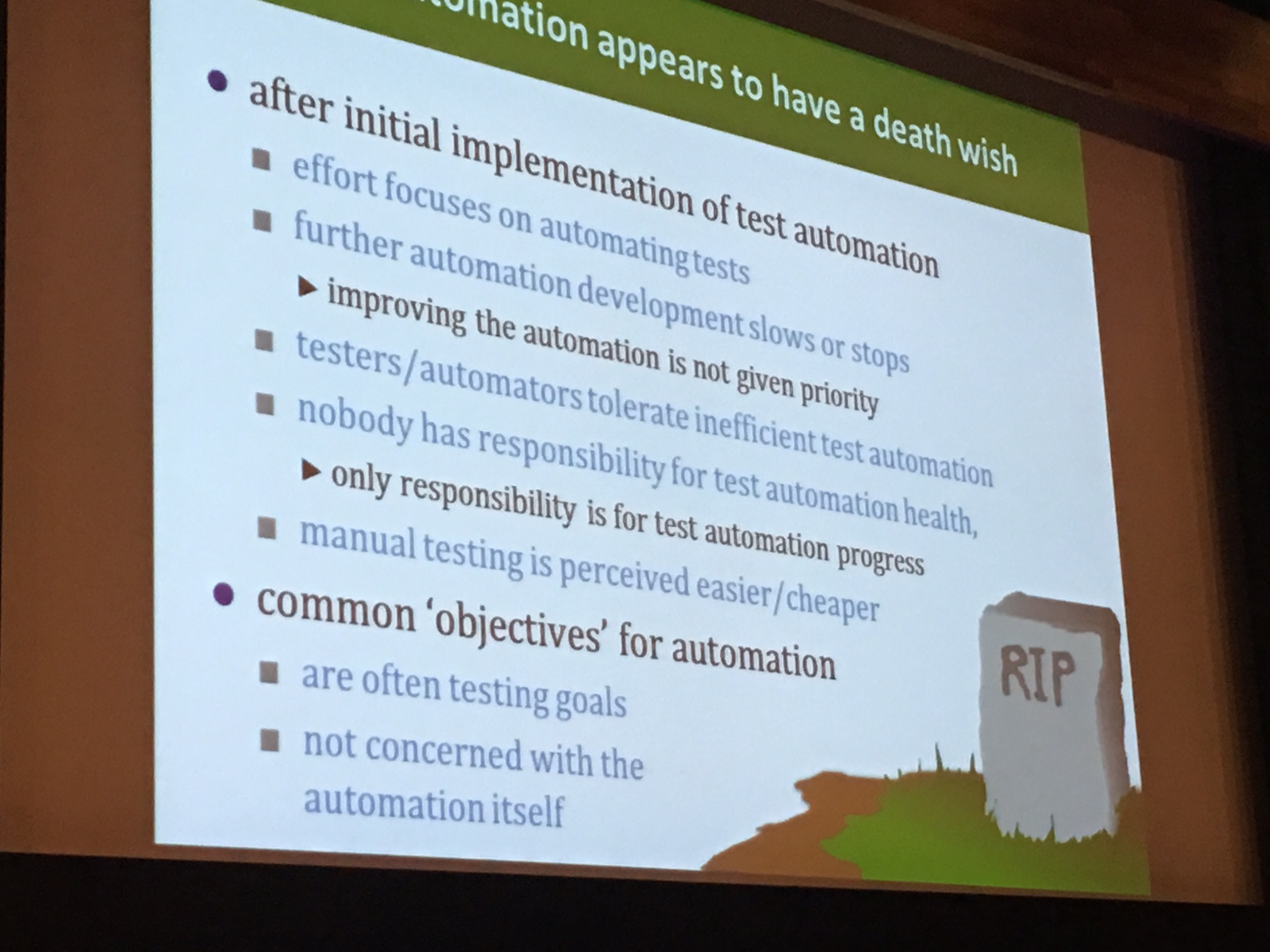

But what causes this phenomenon in the first place? I can think of a couple of reasons.

Production code and test automation code are not treated equally

Let’s make some assumptions:

- You want your production code to run in a stable, predictable and well performing manner.

- You want to be able to roll back to a previous version of your production code in case something bad happens.

- You want your production code to be clean, conforming to code standards and well maintainable.

Nothing out of the ordinary or too demanding, right? What if we replace ‘production code’ with ‘test automation code’ in the list above? Do the assumptions still hold? If not, there’s work to be done. Boldly put: your test automation code is just as important as your production code. You and your organization rely on your test automation code to inform you about the quality of your production code. Go / no-go decisions for deployment into production are made (partly or wholly) based on the outcome of the checks defined in your test automation code. Shouldn’t this code be treated at least as carefully as your production code, then?

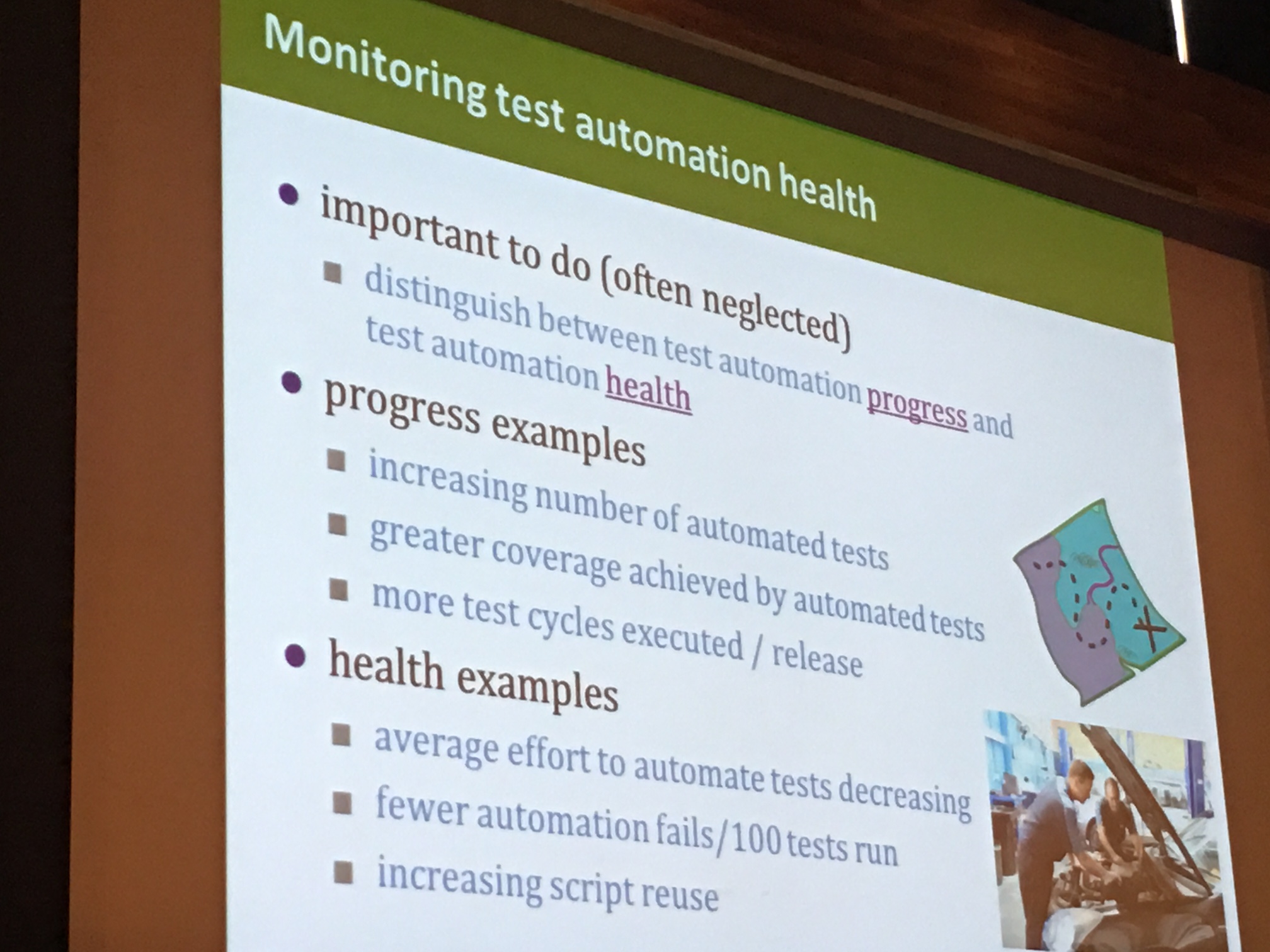

The objectives for test automation are geared towards testing, not towards performance and maintenance

Test automation is not a goal in itself, but a means to the goal of providing insight into the quality of production code (also known as ‘testing’). However, this does not mean that setting goals specific to test automation can simply be skipped. A lot of goals set by a development team (or management, if you’re still in an old-fashioned organization) are geared towards testing, not test automation. For example, you’ll often see goals such as:

- At least X % of our test cases (who uses those anymore?) should be automated

- Our test cycle time should be shortened by Y % if we implement test automation

Not only can you argue about the value of these goals themselves, but there’s something else at hand too: these goals are geared towards testing, not specific to test automation. I rarely (if at all) see goals such as:

- Our automated tests should report less than X % false negatives / false positives per run / per month

- The time it takes to implement automated tests should become X % shorter over time as our solution matures
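To make the first of these goals measurable, you need to record, for each automated check result, whether triage confirmed a real problem. The sketch below is a minimal illustration, assuming a hypothetical `TriagedResult` record and hypothetical helpers `false_result_rates` and `run_is_healthy`. It counts false alarms (a reported failure with nothing wrong in the product) and missed defects (a pass despite a real defect) as percentages of a run. Which of the two your team calls a ‘false positive’ depends on convention, so the code avoids those terms.

```python
from dataclasses import dataclass


@dataclass
class TriagedResult:
    """One automated check result after triage (hypothetical record type)."""
    test_name: str
    reported_failure: bool  # did the automated check report a failure?
    real_defect: bool       # did triage confirm an actual defect in the product?


def false_result_rates(results: list[TriagedResult]) -> tuple[float, float]:
    """Return (false_alarm_pct, missed_defect_pct) as percentages of all results."""
    if not results:
        return 0.0, 0.0
    total = len(results)
    # Check failed, but there was nothing wrong with the product.
    false_alarms = sum(1 for r in results if r.reported_failure and not r.real_defect)
    # Check passed, even though the product was broken.
    missed = sum(1 for r in results if not r.reported_failure and r.real_defect)
    return 100.0 * false_alarms / total, 100.0 * missed / total


def run_is_healthy(results: list[TriagedResult], max_false_pct: float) -> bool:
    """Hypothetical goal: combined false results must stay below max_false_pct."""
    false_alarm_pct, missed_pct = false_result_rates(results)
    return false_alarm_pct + missed_pct < max_false_pct


# Made-up example run with four triaged results.
run = [
    TriagedResult("login", reported_failure=True, real_defect=False),
    TriagedResult("checkout", reported_failure=False, real_defect=False),
    TriagedResult("search", reported_failure=True, real_defect=True),
    TriagedResult("profile", reported_failure=False, real_defect=False),
]
print(false_result_rates(run))   # (25.0, 0.0)
print(run_is_healthy(run, 5.0))  # False
```

Missed defects usually only surface later (for example in production), so it may make more sense to recompute these rates per month than per run.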

I think these metrics are just as valuable as the ones I mentioned previously. To be honest, I think the first one is the most valuable of all. I’d rather have no automated test than a test I do not trust.

Effects of not taking care of test automation health

To me, the points above are reason enough to take good care of my test automation health. And I think they should be reason enough for you, too. But what would happen if you don’t? Test automation code that’s not properly taken care of will cause:

- An increased risk of flaky tests whose outcome cannot be trusted, which leads to

- distrust in test automation results from your team and other stakeholders, which leads to

- risk of abandonment of the test automation solution altogether (shelfware), which leads to

- tests being executed by hand again (either in parallel with the poorly performing automated tests or instead of them), which ultimately leads to

- an increase in time required to perform the necessary tests, instead of the decrease desired when test automation was introduced

So, to wrap things up, it might be a good idea to start taking care of your test automation health. Start measuring both the quality of your test automation code (there are lots of great tools for that) and the results it provides, for example in terms of false positives and false negatives, as well as the mean time required to add new checks. Make test automation health an objective in itself, and make someone (a team, or if need be a person) responsible for it. Measure, display and try to improve continually. Your test automation code will thank you for it.
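The mean time required to add new checks can be tracked in a similar way. As a sketch, assuming you log hypothetical (started, merged) timestamps for every new check and group them per period (for example per quarter), you can compute the mean per period and check whether it drops by at least a chosen percentage each time. The function names and the example timestamps are made up for illustration.

```python
from datetime import datetime
from statistics import mean


def mean_hours_to_add_check(records: list[tuple[datetime, datetime]]) -> float:
    """Mean hours between starting work on a check and merging it (hypothetical log)."""
    if not records:
        raise ValueError("no checks were added in this period")
    return mean((merged - started).total_seconds() / 3600 for started, merged in records)


def is_improving(period_means: list[float], min_drop_pct: float) -> bool:
    """True if each period's mean is at least min_drop_pct lower than the previous one."""
    return all(
        curr <= prev * (1 - min_drop_pct / 100)
        for prev, curr in zip(period_means, period_means[1:])
    )


# Made-up example data for two quarters.
q1 = [
    (datetime(2024, 1, 8, 9), datetime(2024, 1, 9, 9)),     # 24 hours
    (datetime(2024, 1, 15, 9), datetime(2024, 1, 16, 21)),  # 36 hours
]
q2 = [
    (datetime(2024, 4, 1, 9), datetime(2024, 4, 2, 3)),     # 18 hours
]
means = [mean_hours_to_add_check(q1), mean_hours_to_add_check(q2)]
print(means)                    # [30.0, 18.0]
print(is_improving(means, 10))  # True
```

Requiring a drop in every period is deliberately strict; once your solution has matured, a flat trend may be perfectly healthy, so you might relax the check to ‘not increasing’.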